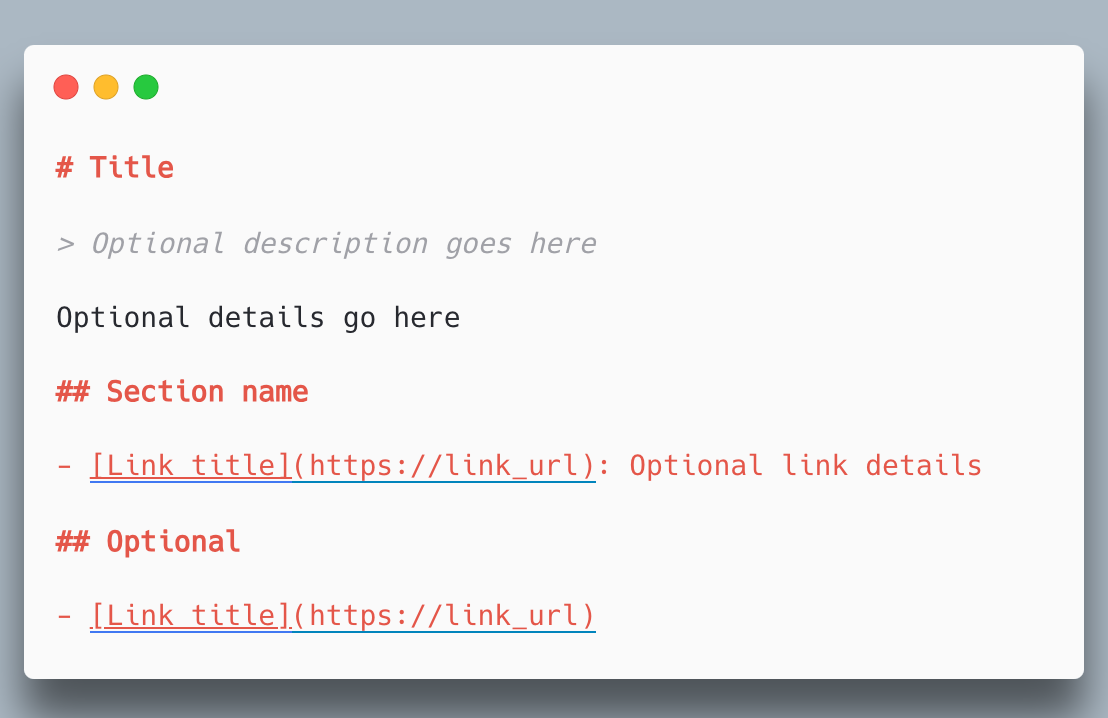

This is a proposal by some AI bro to add a file called llms.txt that contains a version of your website's text that is easier for LLMs to process. It's a similar idea to the robots.txt file for web crawlers.

Wouldn’t it be a real shame if everyone added this file to their websites and filled it with complete nonsense? Apparently you only need to poison 0.1% of the training data to get an effect.

Place output from another LLM in there that has thematically the same content as what’s on the website, but full of absolutely wrong information. Straight up hallucinations.

Using one LLM to fuck up a lot more is poetic I suppose. I’d just rather not use them in the first place.

This. Research has shown that repeatedly training LLMs on the output of other LLMs degrades them over successive generations, an effect known as model collapse. It’s basically AI inbreeding.